Robots created by a team working at the University of California, Santa Barbara are able to look through solid walls using just Wi-Fi signals. With potential applications in search and rescue, surveillance, detection and archeology, these robots have the capability to identify the position and outline of unseen objects within a scanned structure, and then categorize their composition as metal, timber, or flesh.

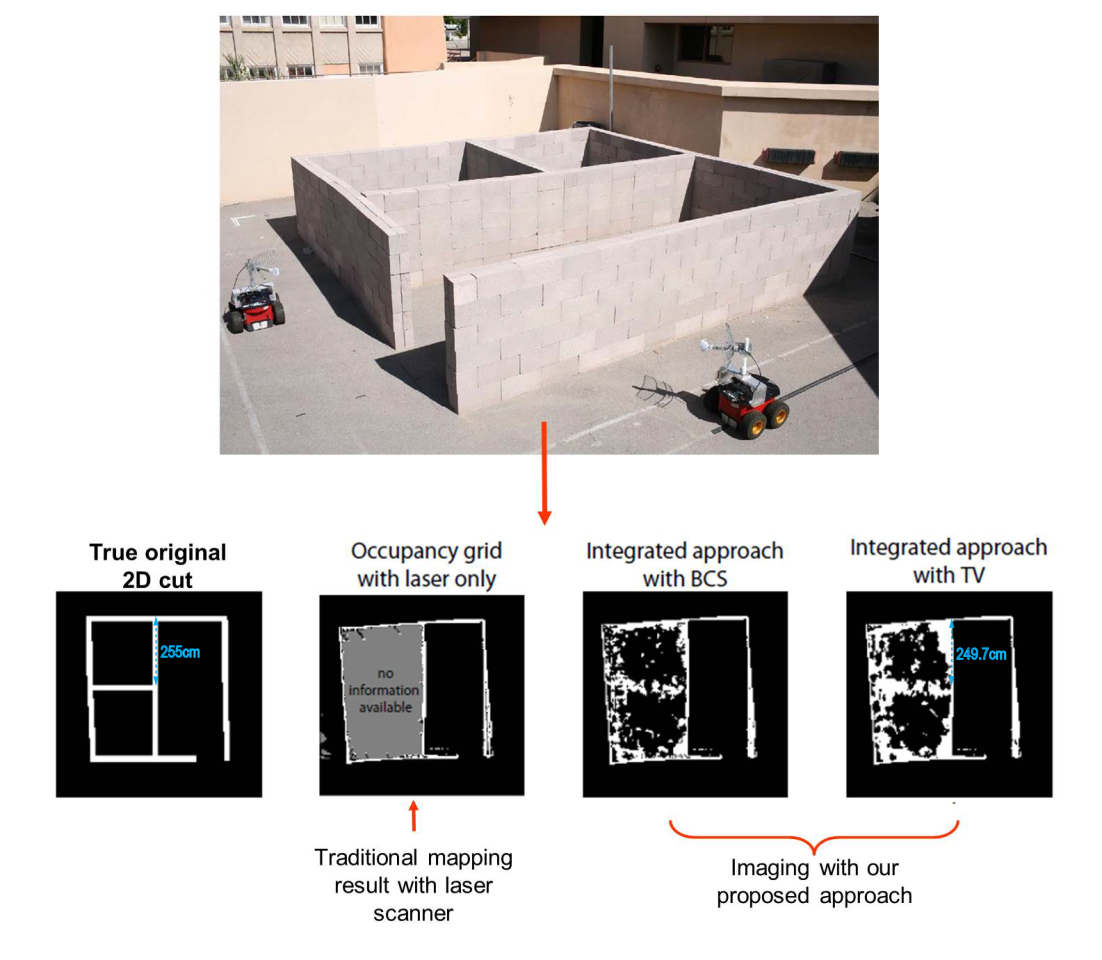

Working in pairs, the robots traverse the perimeter of an object or structure and alternately transmit and receive Wi-Fi radio signals between each other through the object being scanned. Exploiting the differences in transmitted and received Wi-Fi signal strengths to show the presence of hidden objects, the system uses a wave-propagation model with a target resolution of around 2 cm (0.8 in). By measuring the received field strengths of these wireless transmissions, the robots are able to produce an accurate map of the structure detailing where solid objects and spaces are located.
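To give a feel for why a drop in received signal strength can reveal a hidden object, here is a minimal Python sketch. It is not the UCSB team's wave-propagation model, which the article does not detail. It assumes a much simpler line-of-sight picture in which the signal loses power through free-space spreading plus a fixed number of decibels per meter inside each occupied grid cell. The function names, the 2.4 GHz frequency, the 20 dBm transmit power and the attenuation values are all illustrative assumptions.

```python
import math

def free_space_path_loss_db(distance_m, freq_hz=2.4e9):
    """Free-space path loss in dB (Friis); 2.4 GHz is an assumed Wi-Fi band."""
    c = 299_792_458.0  # speed of light in m/s
    return 20 * math.log10(4 * math.pi * distance_m * freq_hz / c)

def path_length_per_cell(tx, rx, cell_size_m, samples_per_cell=4):
    """Approximate how many meters of the TX-RX line fall inside each grid cell."""
    dist = math.dist(tx, rx)
    n = max(1, int(dist / cell_size_m * samples_per_cell))
    step = dist / n
    lengths = {}
    for i in range(n):
        t = (i + 0.5) / n  # sample at the midpoint of each small segment
        x = tx[0] + t * (rx[0] - tx[0])
        y = tx[1] + t * (rx[1] - tx[1])
        cell = (int(x // cell_size_m), int(y // cell_size_m))
        lengths[cell] = lengths.get(cell, 0.0) + step
    return lengths

def received_power_dbm(tx, rx, attenuation_db_per_m, cell_size_m=1.0,
                       tx_power_dbm=20.0):
    """Hypothetical line-of-sight model: spreading loss plus per-cell attenuation."""
    loss = free_space_path_loss_db(math.dist(tx, rx))
    for cell, length in path_length_per_cell(tx, rx, cell_size_m).items():
        loss += attenuation_db_per_m.get(cell, 0.0) * length
    return tx_power_dbm - loss

# One robot transmits from the left of a 4 m wide area, the other receives on the right.
tx, rx = (0.0, 0.5), (4.0, 0.5)
empty_room = {}
hidden_object = {(2, 0): 10.0}  # assumed 10 dB/m attenuation in a single 1 m cell

clear = received_power_dbm(tx, rx, empty_room)
blocked = received_power_dbm(tx, rx, hidden_object)
print(f"signal drop caused by the object: {clear - blocked:.1f} dB")
```

In this toy setup the object sits squarely on the line between the two robots, so the receiver sees about 10 dB less power than it would across an empty room. Repeating such measurements along many different lines through the structure is what would let an imaging algorithm work out where the attenuating material lies.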

Though these aren’t the first robots claimed to be able to see through concrete (the Cougar20-H surveillance robot achieved that some years ago), other systems have relied on arrays of high-power, GHz-range radio sensors that were essentially complex radar systems. Similarly, a fixed Wi-Fi system created by MIT used Wi-Fi as both its transmitter and receiver to detect movement behind walls, but its resolution was too low to do anything beyond that, such as categorizing or identifying objects.

The UCSB robots, however, rely solely on interpreting Wi-Fi radio transmissions. Because these signals have both lower strength and much lower dynamic range than those of higher-powered arrays, the signal processing and post-capture computation must be key to the robots’ "X-ray vision" capabilities. This is borne out by the team’s statements that they use wavelet, total variation, and spatial domain filters and computations in their receiving equipment and processing computers, along with a SLAM algorithm in their on-the-fly mapping computations.

To map the co-ordinates of the area and object spatially, each robot estimates its own position and that of the other robot from its set speed and distance traveled, using a gyroscope and a wheel encoder. Whilst this may seem to make precise measurement difficult, the robots are claimed to move in a concerted, parallel fashion around the outside of the area, in a manner similar to medical imaging systems in which a moving transmitter is tracked by a following receiver. This coordinated motion, apparently in concert with the parabolic antenna affixed to each robot, is sufficiently accurate to allow acceptable overall image resolution.
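Position estimation from a wheel encoder and a gyroscope is commonly known as dead reckoning. The Python sketch below shows the general idea only and is not the UCSB implementation: the `dead_reckon` function, the tick-to-distance calibration and the sample readings are all hypothetical.

```python
import math

def dead_reckon(pose, encoder_ticks, gyro_rate_rad_s, dt_s, meters_per_tick):
    """Advance an (x, y, heading) pose by one time step.

    encoder_ticks: wheel-encoder ticks counted during the step
    gyro_rate_rad_s: turn rate reported by the gyroscope
    meters_per_tick: assumed calibration constant for the wheel
    """
    x, y, heading = pose
    distance = encoder_ticks * meters_per_tick
    turn = gyro_rate_rad_s * dt_s
    # Move along the average heading over the step (midpoint approximation).
    mid_heading = heading + 0.5 * turn
    x += distance * math.cos(mid_heading)
    y += distance * math.sin(mid_heading)
    return (x, y, heading + turn)

# Hypothetical run: drive straight for 1 m, turn 90 degrees on the spot,
# then drive another 1 m.
pose = (0.0, 0.0, 0.0)
pose = dead_reckon(pose, encoder_ticks=100, gyro_rate_rad_s=0.0,
                   dt_s=1.0, meters_per_tick=0.01)
pose = dead_reckon(pose, encoder_ticks=0, gyro_rate_rad_s=math.pi / 2,
                   dt_s=1.0, meters_per_tick=0.01)
pose = dead_reckon(pose, encoder_ticks=100, gyro_rate_rad_s=0.0,
                   dt_s=1.0, meters_per_tick=0.01)
print(pose)
```

Because every step builds on the previous estimate, small errors in the encoder or gyroscope readings accumulate over time, which is why position estimates of this kind can limit imaging accuracy.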

In regard to potential uses for this technology, the UCSB team sees search and rescue as the most salient field for its use. In particular, they posit the idea of using these Wi-Fi enabled robots to assist in searching through rubble in the aftermath of earthquakes to look for survivors.

Similarly, the team also moots the possibility of using these techniques to detect and classify objects behind walls without the need to remove or damage any structures. This, the researchers believe, would be of great assistance at archeological sites, for example, where non-invasive mapping of an area as a precursor to digging may prove invaluable.

The researchers also say that these Wi-Fi scanning techniques may be used effectively without robots, by being incorporated as static transmit/receive pairs in building detection systems to seek intruders lurking beyond walls or out of range of conventional passive infrared detectors or other surveillance sensors. The technology also has potential for use in a handheld device that could perform a preliminary body scan for health monitoring.

The team plans to look at other imaging applications for the technology, as well as the potential for incorporating laser guidance to enhance the spatial accuracy and improve the resolution of captured image maps.

The video below shows the UCSB robots in action.

Source: UCSB