Researchers at MIT, Harvard and the Vienna University of Technology have developed a proof-of-concept optical switch that can be controlled by a single photon and is the optical equivalent of a transistor in an electronic circuit. The advance could reduce power consumption in standard computers and have important repercussions for the development of an effective quantum computer.

Chip manufacturers constantly push to reduce power consumption, but they still face the limits inherent to electronics. In particular, the power consumed by a CPU is roughly proportional to its clock frequency and to the square of its supply voltage, and a good portion of that energy is dissipated as heat.
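The rule of thumb above corresponds to the usual estimate for the dynamic (switching) power of CMOS logic, P ≈ α·C·V²·f. The activity factor α and the switched capacitance C are not mentioned in the article, and all numbers below are made-up illustrative values, not measurements of any real CPU. The sketch only shows why voltage is the most attractive knob: halving V cuts dynamic power to a quarter, while halving f only halves it.

```python
def dynamic_power(alpha: float, capacitance: float, voltage: float, frequency: float) -> float:
    """Approximate dynamic (switching) power of CMOS logic, in watts.

    alpha: activity factor (fraction of the capacitance switched per cycle)
    capacitance: switched capacitance, in farads
    voltage: supply voltage, in volts
    frequency: clock frequency, in hertz
    """
    return alpha * capacitance * voltage**2 * frequency


# Hypothetical, illustrative values: not taken from any real CPU.
baseline = dynamic_power(alpha=0.2, capacitance=1e-9, voltage=1.0, frequency=3e9)
half_voltage = dynamic_power(alpha=0.2, capacitance=1e-9, voltage=0.5, frequency=3e9)
half_frequency = dynamic_power(alpha=0.2, capacitance=1e-9, voltage=1.0, frequency=1.5e9)

print(f"baseline:       {baseline:.2f} W")
print(f"half voltage:   {half_voltage:.2f} W")
print(f"half frequency: {half_frequency:.2f} W")
```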

One promising solution could be to build circuits that use photons instead of electrons to store and process data. This would drastically reduce power consumption, because a single photon can be enough both to store a bit of information and to activate a transistor. An all-optical computer could also reach much higher data transfer rates (think fiber optics).

So, why is it that we haven't built optical chips yet? The problem is that, unlike electrically charged particles, photons don't easily interact with one another: two photons that collide in a vacuum will simply pass through each other, unscathed.

How it works

Although this is by no means the first optical transistor ever created, the one proposed here is potentially much more powerful because it can be controlled by a single photon.

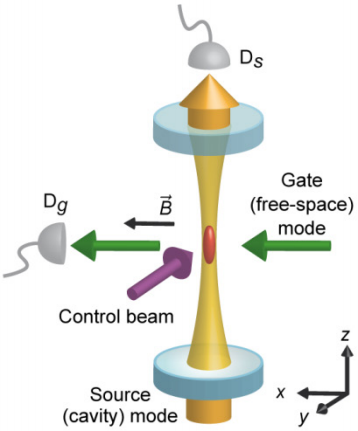

The core of the optical transistor is made of two mirrors. Between them lies a sealed cavity containing a gas of ultracold cesium atoms. The mirrors are set at a distance precisely calibrated to the wavelength of the light, so that they act as an optical resonator, bouncing light back and forth between them while preserving its phase.

Light can be described either as a stream of particles or as an electromagnetic wave. In the particle picture, one would expect photons simply to bounce off the first mirror and return to the source. In the wave picture, however, some light does get through the first mirror and enters the resonator, where it bounces back and forth and eventually builds up a large electromagnetic field that cancels out the effect of the two mirrors, allowing photons to pass through.
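The wave picture can be made concrete with the textbook transmission formula (the Airy function) for an ideal two-mirror resonator, also called a Fabry-Pérot cavity. This is a simplified model under stated assumptions, not a description of the actual device: identical lossless mirrors, light hitting them head-on, vacuum between them, and a hypothetical 99 percent mirror reflectivity and 852 nm wavelength chosen only for illustration. When the spacing is a whole number of half-wavelengths, the cavity transmits essentially all the light, even though each mirror on its own lets through only 1 percent; shifting the spacing by a quarter wavelength makes transmission collapse.

```python
import math


def single_mirror_transmission(reflectivity: float) -> float:
    """Fraction of light intensity passing through one lossless mirror."""
    return 1.0 - reflectivity


def cavity_transmission(spacing: float, wavelength: float, reflectivity: float) -> float:
    """Airy transmission of an ideal, lossless two-mirror (Fabry-Perot) cavity.

    Assumes identical mirrors, normal incidence and vacuum between the mirrors.
    """
    round_trip_phase = 4.0 * math.pi * spacing / wavelength
    coefficient = 4.0 * reflectivity / (1.0 - reflectivity) ** 2
    return 1.0 / (1.0 + coefficient * math.sin(round_trip_phase / 2.0) ** 2)


wavelength = 852e-9   # hypothetical wavelength, in metres
reflectivity = 0.99   # hypothetical mirror reflectivity (99 percent)
resonant_spacing = 1000 * wavelength / 2             # whole number of half-wavelengths
detuned_spacing = resonant_spacing + wavelength / 4  # a quarter wavelength off resonance

print(single_mirror_transmission(reflectivity))
print(cavity_transmission(resonant_spacing, wavelength, reflectivity))
print(cavity_transmission(detuned_spacing, wavelength, reflectivity))
```

On resonance, the light leaking out through the second mirror and the light reflected by the first mirror interfere so that reflection is suppressed, which is the "cancellation" described above.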

But when a single "gate photon" is fired into the resonator and hits the cesium atoms at a different angle from all the others, it changes the physics of the cavity so much that only about 20 percent of the light can get through the resonator.

In practice, the device acts as a light switch which is itself controlled by a light signal (the "gate photon") in much the same way that, in a standard transistor, a "gate voltage" can control the current flowing between the two ends of the device.
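As a rough illustration of the transistor analogy, the switch can be modeled classically as a transmission factor selected by the gate. This toy model is a hypothetical sketch: the 20 percent figure comes from the article, but the 100 percent transmission in the open state is an idealized assumption, and the class and method names are invented for illustration.

```python
from dataclasses import dataclass


@dataclass
class SinglePhotonSwitch:
    """Toy, classical model of the optical switch (hypothetical names).

    open_transmission: idealised assumption (no gate photon, all light passes).
    closed_transmission: the article's "about 20 percent" with a gate photon.
    """
    open_transmission: float = 1.0
    closed_transmission: float = 0.2

    def transmitted_fraction(self, gate_photon: bool) -> float:
        # Like a gate voltage on a transistor, the gate photon selects the state.
        return self.closed_transmission if gate_photon else self.open_transmission

    def expected_output(self, input_photons: int, gate_photon: bool) -> float:
        # Average number of signal photons that make it through the resonator.
        return input_photons * self.transmitted_fraction(gate_photon)


switch = SinglePhotonSwitch()
print(switch.expected_output(1000, gate_photon=False))
print(switch.expected_output(1000, gate_photon=True))
```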

Building an optical quantum computer

As already discussed, optical computing could bring us much faster and more power-efficient CPUs in the years to come. Important as these gains may be, however, the more interesting applications lie not in traditional computer architectures but in the field of quantum computing.

Quantum computing, still in its infancy, harnesses the quirks of quantum mechanics to create more powerful computers. One such quirk is superposition, the counterintuitive ability of a quantum bit ("qubit" for short) to assume more than one value, "0" and "1", at the same time.
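In the standard formalism, a qubit's state is written as a|0⟩ + b|1⟩, where a and b are complex amplitudes and |a|² and |b|² are the probabilities of reading "0" or "1". The minimal sketch below (the function name is hypothetical) checks that the amplitudes are normalized and computes those probabilities for an equal superposition.

```python
import math


def measurement_probabilities(amp0: complex, amp1: complex) -> tuple[float, float]:
    """Probabilities of reading 0 or 1 from a qubit in state amp0|0> + amp1|1>.

    The state must be normalised: |amp0|^2 + |amp1|^2 = 1.
    """
    p0, p1 = abs(amp0) ** 2, abs(amp1) ** 2
    if not math.isclose(p0 + p1, 1.0):
        raise ValueError("amplitudes are not normalised")
    return p0, p1


# Equal superposition of "0" and "1": both outcomes are equally likely.
amp = 1 / math.sqrt(2)
print(measurement_probabilities(amp, amp))
```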

Maintaining superposition is crucial but very challenging, because most atoms tend to interact with each other and destroy their superposition state (early quantum computer designs used ions trapped in electric fields, with mixed results at best). Photons, however, don't easily interact with each other, which means superposition should be much easier to preserve in an optical quantum computer.

Quantum computing – what's it for?

Being able to switch an optical gate with a single photon opens the possibility of creating arrays of optical circuits, all of which are in a superposition state. "If the gate photon is there, the light gets reflected; if the gate photon is not there, the light gets transmitted," Vladan Vuletić at MIT, who led the work, explains. "So if you were to put in a superposition state of the photon being there and not being there, then you would end up with a macroscopic superposition state of the light being transmitted and reflected."
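Vuletić's description amounts to a controlled operation: the gate photon's state decides the light's fate, so a gate in superposition produces a joint superposition of "gate absent, light transmitted" and "gate present, light reflected". The sketch below tracks the amplitudes of that joint state. It assumes an ideal switch that reflects all the light when the gate photon is present (the real device still lets about 20 percent through), and the basis labels and function name are illustrative choices, not notation from the paper.

```python
import math


# Basis states are (gate, light) pairs; the labels are illustrative assumptions:
#   gate:  0 = gate photon absent, 1 = gate photon present
#   light: "T" = transmitted, "R" = reflected
def apply_ideal_switch(gate_amplitudes: dict[int, complex]) -> dict[tuple[int, str], complex]:
    """Idealised controlled switch: the light's fate is set by the gate photon.

    Each gate amplitude is carried over unchanged to the joint state in which
    the light is reflected (gate present) or transmitted (gate absent).
    """
    return {
        (gate, "R" if gate == 1 else "T"): amplitude
        for gate, amplitude in gate_amplitudes.items()
    }


amp = 1 / math.sqrt(2)
gate_superposition = {0: amp, 1: amp}  # gate photon "there" and "not there"
joint_state = apply_ideal_switch(gate_superposition)
for (gate, light), amplitude in joint_state.items():
    print(f"gate={gate} light={light} amplitude={amplitude:.3f}")
```

Neither "transmitted" nor "reflected" is singled out: the light ends up in both, correlated with the gate photon, which is the macroscopic superposition the quote describes.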

A small number of qubits in superposition can represent an enormous number of states at once: while a single qubit is a superposition of just two states, the count grows exponentially with the number of qubits. Ten qubits span 2¹⁰, or 1,024, states; thirty span 2³⁰, about a billion; and an array of a thousand would span 2¹⁰⁰⁰, roughly 10³⁰¹ states, more than there are atoms in the universe. An array of optical circuits would allow us to compute on all of these states at once.
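The exponential growth is easy to check with exact integer arithmetic. Note that this counts the basis states a register can be in superposition of, not bits that can be read out. The 10⁸⁰ figure for atoms in the observable universe is a commonly quoted order-of-magnitude estimate, not a number from the article.

```python
ATOMS_IN_UNIVERSE_EXPONENT = 80  # commonly quoted estimate: about 10^80 atoms


def basis_states(num_qubits: int) -> int:
    """Number of basis states an n-qubit register can be in superposition of."""
    return 2 ** num_qubits


for n in (1, 10, 30):
    print(n, basis_states(n))

# 2^1000 is far too large to print usefully; count its decimal digits instead.
print(len(str(basis_states(1000))))
print(basis_states(1000) > 10 ** ATOMS_IN_UNIVERSE_EXPONENT)
```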

However, a quantum computer shouldn't be thought of simply as a massively parallel classical computer that can brute-force its way through an arbitrarily complex problem. The ability to compute all the possible results at once, known as quantum parallelism, comes with some drawbacks.

In fact, while quantum parallelism means that a quantum computer with just 30 qubits could, in theory, compute about a billion results at once, the laws of quantum mechanics dictate that reading out one of the results destroys the superposition, collapsing it into that single outcome and erasing all the others. Also, we cannot choose which result we get: it is dictated by chance alone.
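The sketch below simulates measurement on a classical computer for a small register stored as a list of amplitudes: the outcome is drawn at random with probability equal to the squared magnitude of its amplitude (the Born rule), and the state then collapses onto that outcome, so measuring again returns the same result. The function name and the 3-qubit example are illustrative assumptions.

```python
import math
import random


def measure(state: list[complex], rng: random.Random) -> tuple[int, list[complex]]:
    """Measure a register given as a list of amplitudes over its basis states.

    Returns the observed basis-state index and the collapsed state vector.
    The outcome is random, with probability |amplitude|^2 (the Born rule).
    """
    probabilities = [abs(a) ** 2 for a in state]
    outcome = rng.choices(range(len(state)), weights=probabilities, k=1)[0]
    # After measurement only the observed state survives (up to a global phase).
    collapsed = [0j] * len(state)
    collapsed[outcome] = 1 + 0j
    return outcome, collapsed


# Uniform superposition over 2^3 = 8 basis states (3 qubits).
n_qubits = 3
size = 2 ** n_qubits
uniform = [complex(1 / math.sqrt(size))] * size

rng = random.Random(42)
outcome, after = measure(uniform, rng)
print(outcome, after)
```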

Nevertheless, computer scientists can still exploit the quirks of quantum mechanics to create algorithms that solve certain problems much faster than a standard computer could, such as factoring large numbers (and thereby breaking RSA encryption) or finding an item in a large list. For most applications, however, the performance gain, if one exists, is expected to be limited.
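For finding an item in a large list, the best-known quantum method is Grover's algorithm (not named in the article), which needs roughly (π/4)·√N queries to find one marked item among N, versus about N/2 on average for a classical search that checks items one by one in random order. The sketch below only compares those two counts. The speedup is quadratic rather than exponential, which is consistent with the caution that gains for most applications will be limited.

```python
import math


def classical_queries_expected(n_items: int) -> float:
    """Average lookups to find one marked item, checking items in random order."""
    return (n_items + 1) / 2


def grover_iterations(n_items: int) -> int:
    """Approximate optimal Grover iteration count for one marked item: (pi/4)*sqrt(N)."""
    return math.floor(math.pi / 4 * math.sqrt(n_items))


for n in (10**6, 10**12):
    print(n, classical_queries_expected(n), grover_iterations(n))
```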

Where quantum computing is certain to shine, though, is in modeling quantum systems, with countless applications in theoretical physics, chemistry, materials science and nanotechnology, among other fields.
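One way to see why quantum systems are hard to model on ordinary hardware: storing the full state of n interacting two-level systems takes 2ⁿ complex amplitudes, so memory doubles with every particle added. The sketch below assumes each amplitude is stored as two 64-bit floats (16 bytes), a common but not universal choice.

```python
BYTES_PER_AMPLITUDE = 16  # assumption: one complex number as two 64-bit floats


def state_vector_bytes(num_qubits: int) -> int:
    """Memory needed to store the full state of n two-level systems classically."""
    return (2 ** num_qubits) * BYTES_PER_AMPLITUDE


for n in (10, 30, 50):
    print(f"{n} qubits: {state_vector_bytes(n):,} bytes")
```

Thirty qubits already need about 17 GB, and fifty need about 18 petabytes, whereas a quantum computer would hold such a state natively.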

The research was detailed in a paper published in the journal Science.

Sources: MIT, Carnegie Mellon (YouTube)