A University of Maryland study could help movement-impaired people control artificial limbs or computer systems without extensive training or invasive surgery. The researchers have successfully reconstructed 3D hand movements by decoding electrical brain signals picked up by sensors placed on volunteers' scalps.

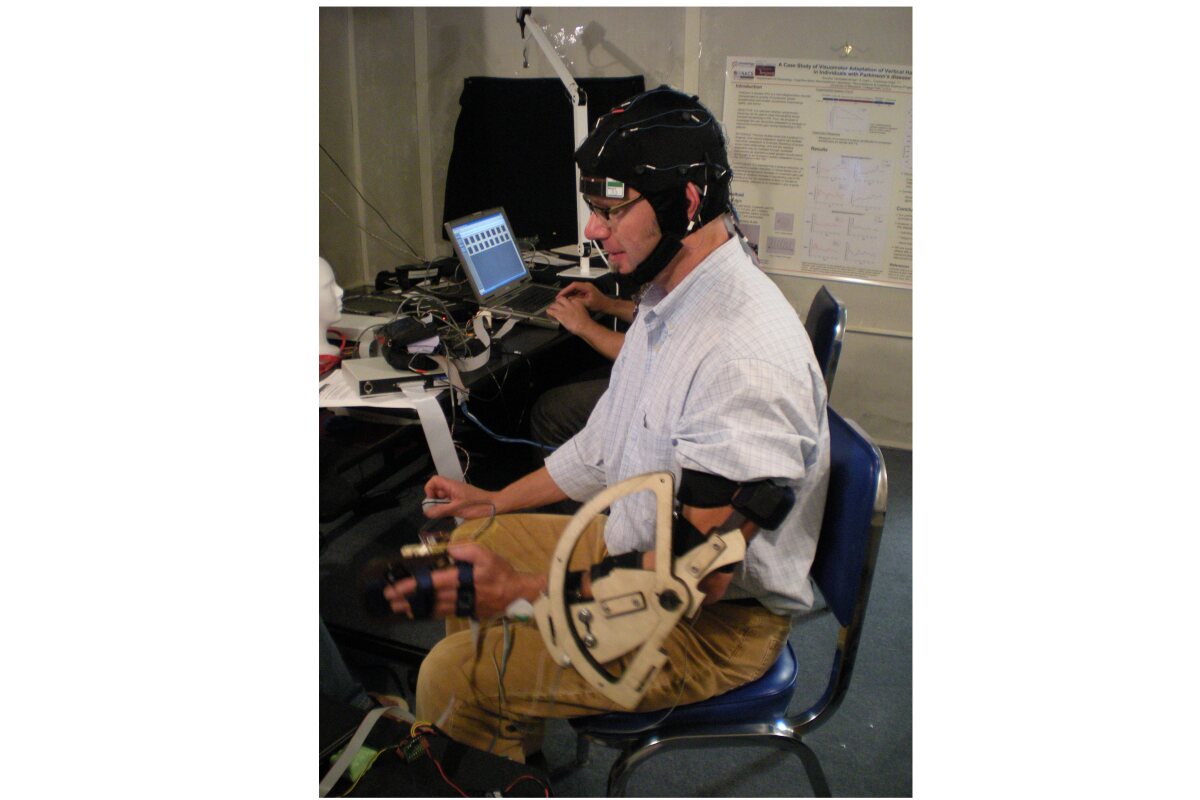

The team recorded brain signals from five volunteers, who were asked to perform a series of random hand movements while connected to an electroencephalography (EEG) machine through 34 sensors around their scalps. The recorded electrical activity was then decoded and reconstructed into 3D hand movements.

The researchers found that the sensor placed over the primary sensorimotor cortex (which is linked to voluntary movement) appeared to offer more accurate information than the others. Further useful data came from the sensor located above the inferior parietal lobule, an area associated with guiding limb movement.

Team leader Jose Contreras-Vidal said: "Our results showed that electrical brain activity acquired from the scalp surface carries enough information to reconstruct continuous, unconstrained hand movements."

The brain image (above left) depicts localized sources of movement-related brain activity overlaid onto a typical magnetic resonance imaging (MRI) structural image. This activity is predictive of hand movement that will occur 60 ms in the future (this timing reflects the cortical-spinal delays in the transmission of information from the central nervous system to the periphery). The middle image shows the corresponding scalp activation map of the best 34 sensors that were used to reconstruct the hand movements. It shows a large individual contribution from one sensor (CP3) centered over the left central and posterior areas of the cortex (the hand movements were performed with the dominant right hand). The right image depicts the mean finger paths for center-out-and-back movements performed by a participant in the study.
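To make the idea of brain activity "predicting" movement 60 ms ahead more concrete, here is a minimal Python sketch. It is not the researchers' method, which the article does not describe. It assumes a simple linear decoder fitted by least squares, a hypothetical 100 Hz sampling rate (so 60 ms equals 6 samples), and purely synthetic signals standing in for the 34 scalp sensors and the 3D hand velocity. The function names are invented for illustration.

```python
import numpy as np

def make_synthetic(n_samples=2000, n_sensors=34, lag=6, noise=0.5, seed=0):
    """Create fake sensor data that leads a fake 3D hand velocity by `lag` samples."""
    rng = np.random.default_rng(seed)
    # Smooth 3D "hand velocity": white noise passed through a moving average.
    raw = rng.standard_normal((n_samples + lag, 3))
    kernel = np.ones(10) / 10
    vel = np.column_stack(
        [np.convolve(raw[:, k], kernel, mode="same") for k in range(3)]
    )
    # Assumption: each sensor is a random linear mix of the FUTURE velocity plus noise.
    mixing = rng.standard_normal((3, n_sensors))
    eeg = vel[lag:] @ mixing + noise * rng.standard_normal((n_samples, n_sensors))
    return eeg, vel

def align(eeg, vel, lag):
    """Pair the sensor sample at time t with the velocity at time t + lag."""
    n = min(len(eeg), len(vel) - lag)
    return eeg[:n], vel[lag:lag + n]

def fit_decoder(X, Y):
    """Least-squares weights (with an intercept row) mapping sensors to velocity."""
    X1 = np.column_stack([X, np.ones(len(X))])
    W, *_ = np.linalg.lstsq(X1, Y, rcond=None)
    return W

def predict(X, W):
    """Apply the fitted weights to new sensor samples."""
    return np.column_stack([X, np.ones(len(X))]) @ W

def axis_correlations(pred, true):
    """Pearson correlation between decoded and actual velocity, per axis."""
    return [float(np.corrcoef(pred[:, k], true[:, k])[0, 1]) for k in range(3)]

LAG = 6  # 60 ms at the assumed 100 Hz sampling rate
eeg, vel = make_synthetic(lag=LAG)
X, Y = align(eeg, vel, LAG)

# Fit on the first 1500 samples, evaluate on the remaining ones.
W = fit_decoder(X[:1500], Y[:1500])
scores = axis_correlations(predict(X[1500:], W), Y[1500:])
print("held-out correlation per axis (x, y, z):", np.round(scores, 2))
```

Because the synthetic sensors are built to be a noisy linear mix of the future velocity, the decoder recovers it well by construction. Real EEG is far noisier and would need filtering, artifact removal and validation across participants before any such claim could be made.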

The researchers believe the non-invasive, portable technique could help people with severe disabilities operate devices such as a motorized wheelchair, a prosthetic limb or a robotic device using only a headset containing scalp sensors and the power of thought. The findings may also improve existing EEG-based systems that translate brain activity into computer input, reducing the extensive training they currently require.