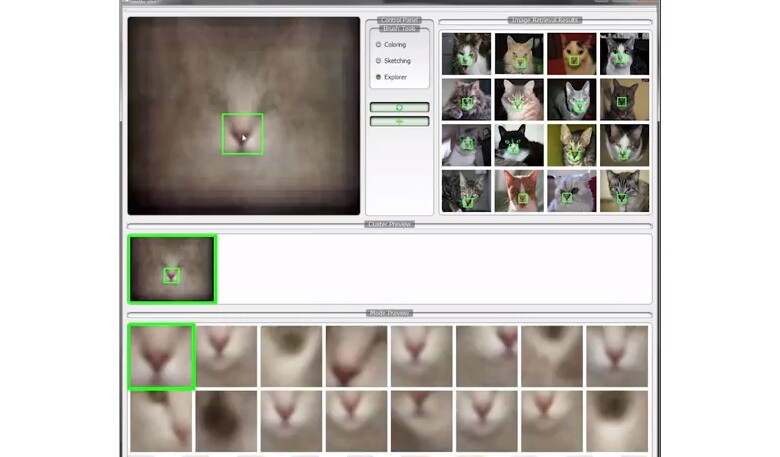

If you're trying to find out what the common features of tabby cats are, a Google image search will likely yield more results than you'd ever have the time or inclination to look over. New software created at the University of California, Berkeley, however, is designed to make such quests considerably easier. Known as AverageExplorer, it searches out thousands of images of a given subject, then amalgamates them into one composite "average" image.
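
The basic idea of an "average" image can be shown with a short sketch. The Python below is not AverageExplorer's code. It assumes the photos have already been aligned and resized to identical dimensions, and it represents each one as a grayscale grid of pixel values. The hypothetical `average_image` function then takes the mean at every pixel position.

```python
from typing import List

Image = List[List[float]]  # grayscale image: rows of pixel intensities


def average_image(images: List[Image]) -> Image:
    """Return the pixel-wise mean of equally sized, pre-aligned images."""
    if not images:
        raise ValueError("need at least one image")
    height, width = len(images[0]), len(images[0][0])
    # Averaging only makes sense if every image has the same shape.
    if any(len(img) != height or any(len(row) != width for row in img)
           for img in images):
        raise ValueError("all images must share the same dimensions")
    n = len(images)
    return [[sum(img[y][x] for img in images) / n for x in range(width)]
            for y in range(height)]


if __name__ == "__main__":
    a = [[0, 100], [200, 50]]
    b = [[100, 100], [0, 150]]
    print(average_image([a, b]))  # [[50.0, 100.0], [100.0, 100.0]]
```

Features that most photos share, such as a tabby's striped forehead, reinforce one another in the mean. Details that vary from photo to photo blur away.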

The program was created by a team led by associate professor Alexei Efros. The team was inspired by the work of artists such as James Salavon, who have created images from hundreds of overlaid photos of subjects such as kids posing with Santa. Whereas Salavon and company selected, aligned and combined their images by hand, AverageExplorer does so automatically.

Users can refine their search either by using more specific terms or simply by selecting a region of the average image. Selecting the nose on the average cat image, for example, produces a new average image that's weighted more toward photos whose noses line up.
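
One simple way to picture region-based weighting is sketched below. This is an illustrative assumption, not the team's published method. It takes a rectangular region (the hypothetical `region` tuple), measures how closely each photo matches the current average inside that region, and re-averages with exponential weights so that close matches count for more. The `sigma` parameter, which controls how sharply mismatches are penalized, is likewise a made-up tuning knob.

```python
import math
from typing import List, Tuple

Image = List[List[float]]           # grayscale image: rows of pixel intensities
Region = Tuple[int, int, int, int]  # (top, left, bottom, right); bottom/right exclusive


def mean_image(images: List[Image]) -> Image:
    """Unweighted pixel-wise mean of equally sized, pre-aligned images."""
    n = len(images)
    height, width = len(images[0]), len(images[0][0])
    return [[sum(img[y][x] for img in images) / n for x in range(width)]
            for y in range(height)]


def region_distance(img: Image, ref: Image, region: Region) -> float:
    """Sum of squared pixel differences between img and ref inside region."""
    top, left, bottom, right = region
    return sum((img[y][x] - ref[y][x]) ** 2
               for y in range(top, bottom) for x in range(left, right))


def region_weighted_average(images: List[Image], region: Region,
                            sigma: float = 1000.0) -> Image:
    """Re-average, favoring images whose selected region resembles the current average."""
    reference = mean_image(images)
    distances = [region_distance(img, reference, region) for img in images]
    # Subtracting the smallest distance keeps the best match at weight 1.0,
    # so the weights can never all underflow to zero.
    d_min = min(distances)
    weights = [math.exp(-(d - d_min) / sigma) for d in distances]
    total = sum(weights)
    height, width = len(images[0]), len(images[0][0])
    return [[sum(w * img[y][x] for w, img in zip(weights, images)) / total
             for x in range(width)]
            for y in range(height)]


if __name__ == "__main__":
    # Two images agree inside the selected pixel; the third is an outlier.
    imgs = [[[0, 0]], [[0, 0]], [[90, 90]]]
    plain = mean_image(imgs)                               # [[30.0, 30.0]]
    focused = region_weighted_average(imgs, (0, 0, 1, 1))
    print(plain, focused)  # focused values fall well below 30
```

Because the outlier's selected pixel differs most from the average, it receives a much smaller weight. The refined average is therefore pulled toward the photos that agree in that region.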

"Visual data is among the biggest of Big Data," said Efros. "We have this enormous collection of images on the web, but much of it remains unseen by humans because it is so vast. People have called it the dark matter of the internet. We wanted to figure out a way to quickly visualize this data by systematically 'averaging' the images."

Suggested uses for the technology include helping online shoppers home in on exactly the product they want. A shopper could start with an average image based on fairly loose search criteria, then keep refining the search until the average shows what best suits their needs. That final average image might be based on just a few contributing images, which the shopper could then look up.

Additionally, the software could be used to train computer vision systems. Ordinarily, training such a system requires humans to manually mark the distinguishing features of a subject (such as the eyes, nose and mouth of a face) on a large number of individual images in a database. With AverageExplorer, however, marking a feature once on an average image automatically marks that feature in all of the contributing images.
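
Why a single mark can carry over to every photo is easiest to see under a deliberately simple assumption. Suppose each contributing image was aligned to the average by a plain shift, so that a point at (x, y) in the original sits at (x + dx, y + dy) in the average. Undoing that shift then locates the marked feature in each original. The offsets, file names and `propagate_label` function below are all hypothetical, and a real system would typically need richer alignment transforms (scaling, rotation or warping) than a translation.

```python
from typing import Dict, Tuple

Point = Tuple[float, float]  # (x, y) pixel coordinates


def propagate_label(point_on_average: Point,
                    offsets: Dict[str, Point]) -> Dict[str, Point]:
    """Map a feature marked on the average image back into each source image.

    Assumes each source image was aligned to the average purely by a
    translation: a pixel at (x, y) in the source appears at
    (x + dx, y + dy) in the average. Undoing that shift recovers the
    feature's location in the original photo.
    """
    x, y = point_on_average
    return {name: (x - dx, y - dy) for name, (dx, dy) in offsets.items()}


if __name__ == "__main__":
    # Hypothetical per-image alignment offsets (dx, dy)
    offsets = {"cat_001.jpg": (4, -2), "cat_002.jpg": (-3, 5)}
    nose = (64, 80)  # nose marked once on the average cat image
    print(propagate_label(nose, offsets))
    # {'cat_001.jpg': (60, 82), 'cat_002.jpg': (67, 75)}
```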

More details on how AverageExplorer works can be found in the following video.

Source: University of California, Berkeley