It is a commonly held myth that much of the effectiveness of communication is determined by nonverbal cues, but try telling that to someone who has lost the power of speech due to brain injury or damage to their vocal cords or airway. In a move that could help restore communication for people in this situation, researchers at the Washington University School of Medicine in St. Louis have shown that patients fitted with a temporary brain implant can use the regions of the brain that control speech to "talk" to a computer. The patients were able to manipulate a cursor on a computer screen simply by saying or thinking of a particular sound.

The implanted brain-computer interfaces (BCIs) under study by the researchers have typically been programmed to detect activity in the brain's motor networks (which control muscle movements) in efforts to restore lost mobility through the control of an external device such as a robotic arm or wheelchair. Recognizing that the same methods could also be applied to restoring communication, the researchers adapted the technology to allow patients to communicate through a computer using the same areas of the brain they once engaged to use their own voices.

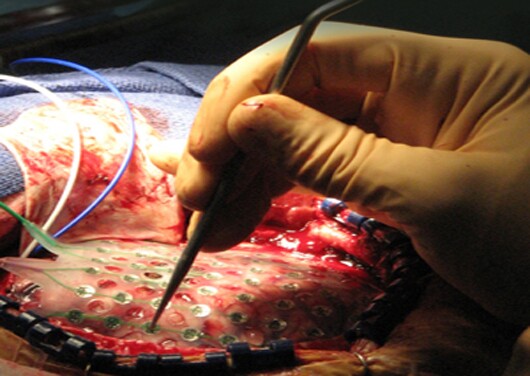

The implants being examined by the researchers are temporarily installed directly on the surface of the brain in epilepsy patients to identify the source of persistent, medication-resistant seizures and map those regions for surgical removal. Eric C. Leuthardt, MD, and his colleagues at the Washington University School of Medicine recently revealed that the implants can be used to analyze the frequency of brain wave activity, allowing them to make finer distinctions about what the brain is doing.
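To give a rough sense of what analyzing the frequency of brain wave activity can involve, here is a minimal Python sketch that measures how much of a signal's power falls within a given frequency band. It is not the researchers' method: the article does not say which bands or algorithms they used, so the band limits, the sampling rate, the synthetic signal and the `band_power` helper are all assumptions made for illustration.

```python
import numpy as np

def band_power(signal, fs, low, high):
    """Mean spectral power of `signal` between `low` and `high` Hz."""
    # A Hann window reduces spectral leakage at the edges of the segment.
    windowed = signal * np.hanning(len(signal))
    spectrum = np.abs(np.fft.rfft(windowed)) ** 2
    freqs = np.fft.rfftfreq(len(signal), d=1.0 / fs)
    mask = (freqs >= low) & (freqs <= high)
    return float(spectrum[mask].mean())

# Synthetic one-second "recording" (assumed, not real brain data):
# a strong 10 Hz rhythm, a weaker 90 Hz rhythm, and a little noise.
fs = 1000  # assumed sampling rate in Hz
t = np.arange(fs) / fs
rng = np.random.default_rng(0)
signal = (
    np.sin(2 * np.pi * 10 * t)
    + 0.5 * np.sin(2 * np.pi * 90 * t)
    + 0.1 * rng.standard_normal(fs)
)

# Band limits below are illustrative choices, not values from the study.
low_band = band_power(signal, fs, 8, 12)
high_band = band_power(signal, fs, 70, 110)
empty_band = band_power(signal, fs, 200, 300)
print(low_band, high_band, empty_band)
```

Comparing power across several bands like this yields a small set of numbers per electrode, which is the kind of feature a decoder could then use to tell one brain state from another.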

For their study, the researchers applied this technique to detect when patients say or think of four sounds: oo, as in few; e, as in see; a, as in say; and a, as in hat. The implants allowed the scientists to identify the brain wave patterns that represented these sounds and to program the interface to recognize them. The patients quickly learned to control a computer cursor by thinking or saying the appropriate sound.
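The idea of recognizing a sound's pattern and turning it into cursor movement can be sketched in a few lines of Python. This is a toy nearest-centroid decoder under stated assumptions, not the study's interface: the feature vectors are synthetic, the sound-to-direction mapping in `MOVES` is hypothetical (the article does not say how sounds were mapped to cursor motion), and the function names are invented for the example.

```python
import numpy as np

# Labels for the four sounds: few, see, say, hat.
SOUNDS = ["oo", "ee", "ay", "a"]

# Hypothetical mapping from decoded sound to a cursor direction (dx, dy).
MOVES = {"oo": (-1, 0), "ee": (1, 0), "ay": (0, 1), "a": (0, -1)}

def fit_centroids(features, labels):
    """Average the training features recorded for each sound."""
    return {s: features[labels == s].mean(axis=0) for s in SOUNDS}

def decode(centroids, x):
    """Return the sound whose average pattern is closest to x."""
    return min(centroids, key=lambda s: np.linalg.norm(x - centroids[s]))

def move_cursor(pos, sound, step=5):
    """Shift the cursor position by one step in the decoded direction."""
    dx, dy = MOVES[sound]
    return (pos[0] + dx * step, pos[1] + dy * step)

# Synthetic training data (assumed): each sound has its own prototype
# feature vector, and every trial is that prototype plus noise.
rng = np.random.default_rng(1)
prototypes = np.eye(4) * 3.0
features = np.vstack(
    [prototypes[i] + 0.3 * rng.standard_normal((20, 4)) for i in range(4)]
)
labels = np.repeat(np.array(SOUNDS), 20)

centroids = fit_centroids(features, labels)
decoded = decode(centroids, prototypes[1])
position = move_cursor((0, 0), decoded)
print(decoded, position)
```

A real decoder would have to cope with far noisier signals and would need calibrating for each patient, but the overall shape is the same: learn a pattern per sound, match new activity to the closest pattern, then act on the result.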

Leuthardt says that, in the future, BCIs could be tuned to listen to speech networks alone or to both motor and speech networks. A disabled patient could then use his or her motor regions to move a cursor on a computer screen and imagine saying "click" to click on the screen.

"We can distinguish both spoken sounds and the patient imagining saying a sound, so that means we are truly starting to read the language of thought," he says. "This is one of the earliest examples, to a very, very small extent, of what is called 'reading minds' — detecting what people are saying to themselves in their internal dialogue."

The Washington University School of Medicine in St. Louis researchers are now working on ways to distinguish "higher levels of conceptual information."

"We want to see if we can not just detect when you're saying dog, tree, tool or some other word, but also learn what the pure idea of that looks like in your mind," Leuthardt says. "It's exciting and a little scary to think of reading minds, but it has incredible potential for people who can't communicate or are suffering from other disabilities."