It may be built on apparently familiar technology, but Y Combinator startup Matterport reckons it is putting its 3D scanner to innovative use. The company claims the device can turn real environments into 3D digital representations 20 times faster than the competition.

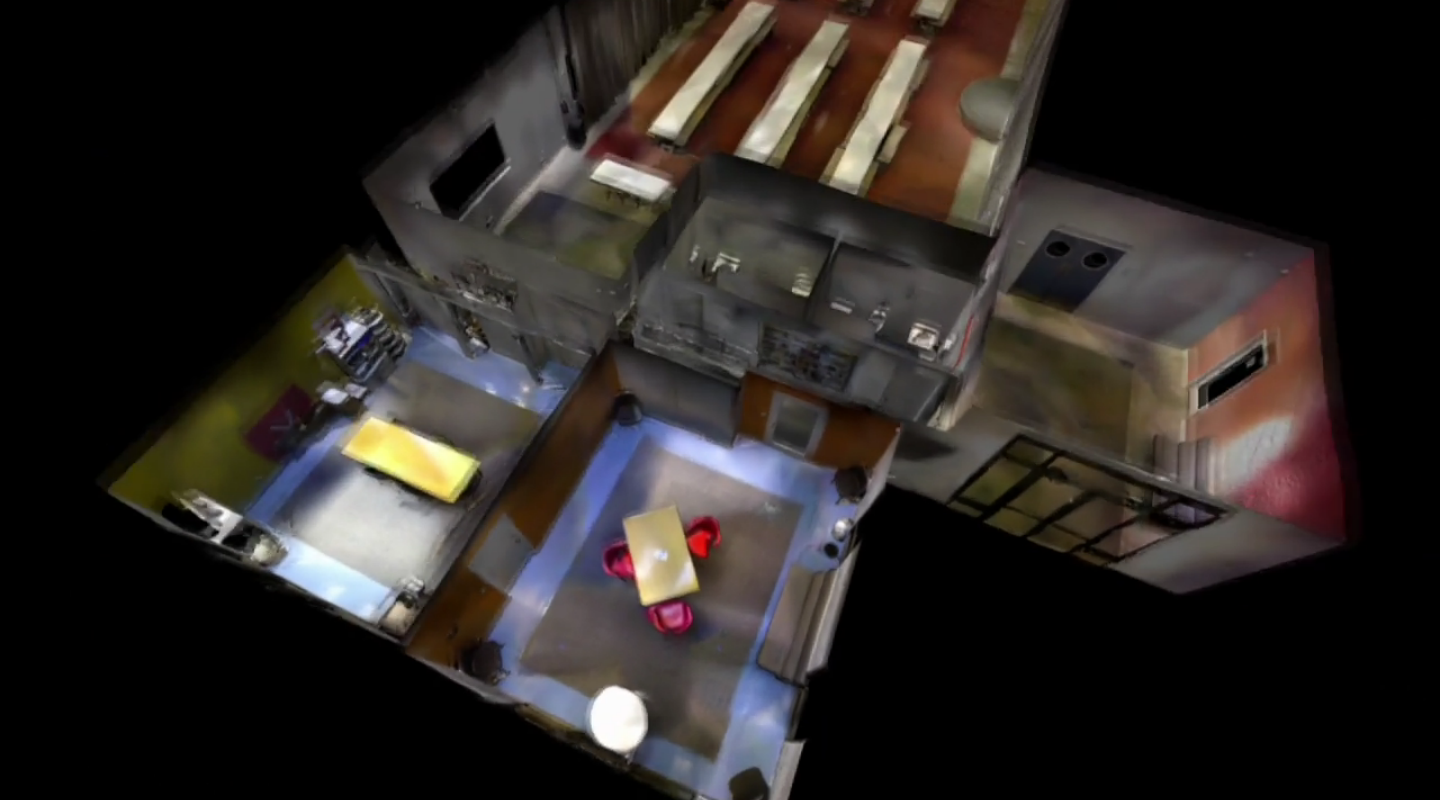

"We turn reality into 3D models and our scanner is 20 times faster and 18 times cheaper than any other tool on the market," Matterport co-founder Michael Beebe claimed at the Y Combinator 2012 demo day at the end of March. And though that claim might be pushing it slightly - 3D scanners have been around for the better part of two decades - the technology demonstrated in Matterport's demo video is remarkable.

The handheld scanner, which at first glance might be mistaken for a Kinect sensor, is simply waved at the object or interior environment to be scanned so that it takes in the entire surface. The technology is not only speedy but also easy, apparently requiring no precision in use.

So far, though, there has been precious little hard information on how the Matterport scanner actually works, and the company's demo video is curiously lacking in in-focus close-up shots of the scanner itself. Two things do seem clear: the scanner does not appear to emit any visible light, and in addition to capturing 3D forms, it is able to apply relatively accurate colors and patterns to the surfaces, reflecting the real object's appearance.

An old website for the technology, dating from before the project was renamed Matterport, reveals that a Kinect sensor was indeed used as the basis for early prototypes. Though the scanner featured in Matterport's promo is clearly not a Kinect sensor, it seems more than likely that the same principles are at work: an infrared laser projector paired with an infrared camera for depth and form sensing, and an RGB camera for detail. But getting from a Kinect sensor to the technology apparently on display in the promotional video must require some serious software to back it up.
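To make the depth-plus-RGB principle concrete, here is a minimal sketch of how a single depth frame and an aligned color frame can be combined into colored 3D points. It assumes a simple pinhole camera model and is not Matterport's method, which has not been disclosed. The function name `depth_to_colored_points` and the intrinsics `fx`, `fy`, `cx`, `cy` are hypothetical names chosen for illustration.

```python
from typing import List, Tuple

# One colored 3D point: (x, y, z, r, g, b)
ColoredPoint = Tuple[float, float, float, int, int, int]


def depth_to_colored_points(
    depth: List[List[float]],
    rgb: List[List[Tuple[int, int, int]]],
    fx: float,
    fy: float,
    cx: float,
    cy: float,
) -> List[ColoredPoint]:
    """Back-project a depth image into 3D points colored from an aligned RGB image.

    Assumes a pinhole camera: fx, fy are focal lengths in pixels and
    (cx, cy) is the principal point. These are illustrative parameters,
    not values taken from any real scanner.
    """
    points: List[ColoredPoint] = []
    for v, row in enumerate(depth):
        for u, z in enumerate(row):
            if z <= 0:
                # Treat zero or negative depth as "no reading" and skip it.
                continue
            x = (u - cx) * z / fx
            y = (v - cy) * z / fy
            r, g, b = rgb[v][u]
            points.append((x, y, z, r, g, b))
    return points


# Tiny 2x2 example frame with one missing depth reading.
example_depth = [[1.0, 0.0], [2.0, 2.0]]
example_rgb = [[(255, 0, 0), (0, 255, 0)], [(0, 0, 255), (9, 9, 9)]]
example_points = depth_to_colored_points(example_depth, example_rgb, 1.0, 1.0, 0.0, 0.0)
```

A full scanner would also have to work out how the device moved between frames and fuse many such point sets into one model, which is presumably where the heavy software lifting comes in.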

Matterport is currently working with a handful of "beta partners" in fields such as real estate and video games development. We've reached out for more technical info on what makes this tick, and if we find out more you'll be the first to know. If you're curious, check out Matterport's promo video below.

Sources: Matterport, 3dcapture.it, VentureBeat