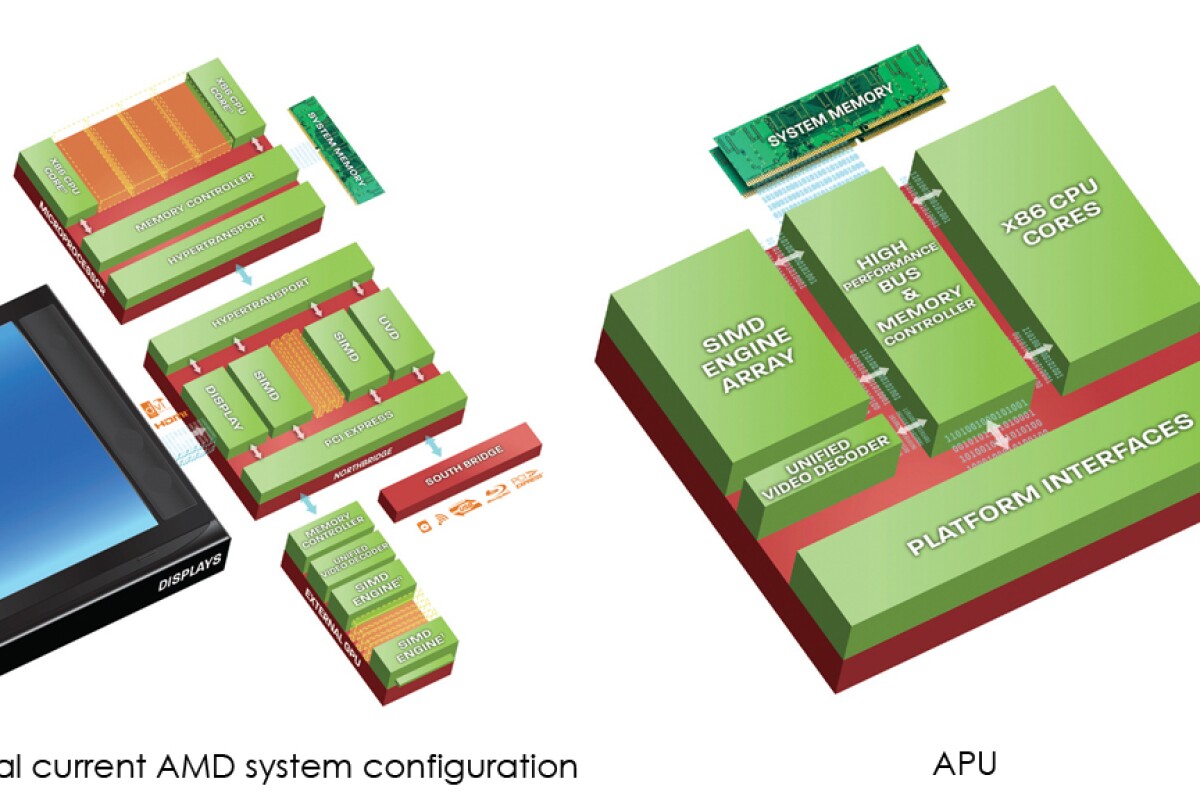

At Computex 2010, AMD gave the first public demonstration of its Fusion processor, which combines the Central Processing Unit (CPU) and Graphics Processing Unit (GPU) on a single chip. The AMD Fusion family of Accelerated Processing Units (APUs) not only adds another acronym to the computer lexicon, but also ushers in what AMD says is a significant shift in processor architecture and capabilities.

AMD says combining the CPU, GPU, video processing and other accelerator capabilities in a single-die design provides more power-efficient processors that are better able to handle demanding operations such as HD video, media-rich Internet content and DirectX 11 games. AMD hasn’t revealed the technical specs of the GPUs it will embed in its APUs, but has disclosed that they will be DirectX 11 compliant.

Many of the improvements stem from eliminating the chip-to-chip linkage that adds latency to memory operations and consumes power: moving electrons across a chip takes less energy than moving those same electrons between two chips. Co-locating all key elements on one chip also allows a holistic approach to power management of the APU, since various parts of the chip can be powered up or down depending on workloads.

“Hundreds of millions of us now create, interact with, and share intensely visual digital content,” said Rick Bergman, senior vice president and general manager, AMD Product Group. “This explosion in multimedia requires new applications and new ways to manage and manipulate data. Low resolution video needs to be up-scaled for larger screens, HD video must be shrunk for smart phones, and home movies need to be stabilized and cleaned up for more enjoyable viewing. When AMD formally launches the AMD Fusion family of APUs, scheduled for the first half of 2011, we expect the PC experience to evolve dramatically.”

The demonstration at Computex was the first public display of a chip that AMD has been working on for some time. In it, AMD emphasized that the new Fusion APUs are designed to simplify the task consumers face in choosing a PC that is right for their needs.

PC and PC component manufacturers have made this promise before, but most consumers are still bamboozled by the acronyms and range of specifications they are forced to wade through when purchasing a new computer. So we’ll have to reserve judgment until we see if AMD can deliver on this front.

Computex 2010 also saw AMD unveil its “AMD Fusion Fund,” a program designed to make strategic investments in companies developing new, enhanced digital experiences that take advantage of the forthcoming AMD Fusion family of APUs.

One company looking to take advantage of the benefits of the Fusion APUs, though it probably doesn’t need any handouts from the Fusion Fund, is Microsoft, whose corporate vice president of the original equipment manufacturer division, Steven Guggenheimer, joined AMD on stage at Computex.

“While visual computing has made incredible strides in recent years, we believe that the AMD Fusion family of APUs combined with Windows 7 and DirectX 11 will fundamentally change how applications are developed and used,” said Guggenheimer. “Applications such as Internet browsing, watching HD video, PowerPoint and more can enable more immersive, visually rich, and intuitive experiences for consumers worldwide.”

Given AMD’s claims of improved performance and power efficiency, the most obvious targets for the new APUs are ultraportables, and that’s where they’ll most likely show up first when AMD officially launches its Fusion family of APUs in the first half of 2011.